Enterprise AI Is Not a Software Deployment Problem

What Beer, Maturana, and Heidegger reveal about why implementation fails

Most writing about enterprise AI is trapped in one of two modes.

The first is vendor theater: a breathless parade of copilots, agents, orchestration layers, and "transformative workflows" rendered in the smooth, bloodless prose of a keynote deck. The second is managerial cope: some variation on "AI won't replace people, but people who use AI will replace people who don't." Both capture something real about the discourse. What they miss is the substance of implementation itself.

Enterprise AI is usually described as though a company has a need, a tool appears, the tool gets rolled out, and value either materializes or doesn't. This flatters everyone involved. It flatters executives because it makes the problem sound purchasable. It flatters vendors because it makes the solution sound shippable. It flatters consultants because it makes them sound like installers of the future.

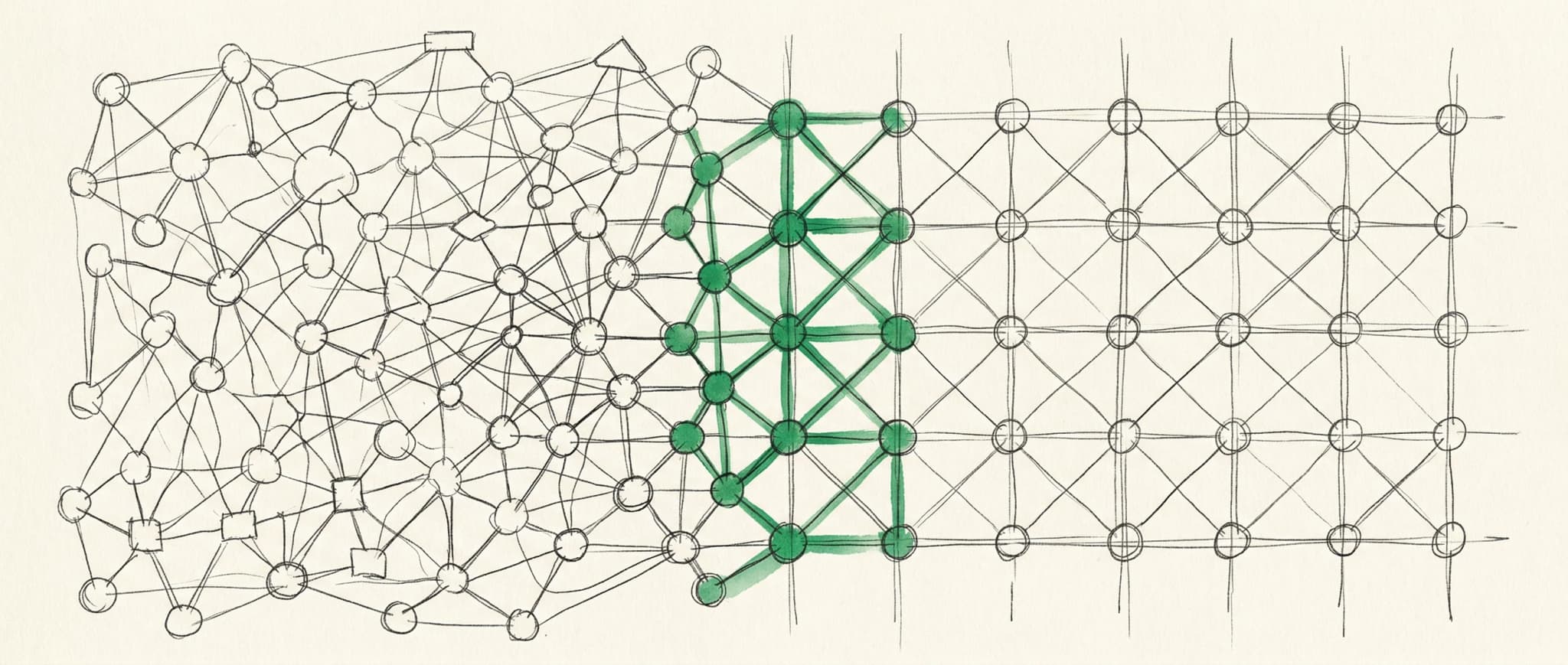

What is actually happening is stranger and more difficult. Enterprise AI implementation is a problem of system variety, mutual adaptation, and tool transparency. Most organizations are trying to solve a high-variety coordination problem with a low-variety tool, then acting surprised when the result is a faster way to produce confusion.

Three thinkers offer a better map: Stafford Beer, Humberto Maturana, and Martin Heidegger. Each comes from a different discipline — cybernetics, biology, phenomenology — but together they give a sharper account of what breaks, and what success feels like.

1. Stafford Beer: most AI projects fail from insufficient variety

Start with the law of requisite variety, which Stafford Beer adopted from W. Ross Ashby and made the cornerstone of his management cybernetics¹: only variety can absorb variety.

In plain English, a system that regulates a messy environment must be at least as behaviorally rich as the environment it is trying to regulate. If the environment throws ten kinds of surprises at you and your control structure only knows how to handle three, you do not have a solution. You have a ritual.
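Ashby also stated the law quantitatively. Let $V_D$ be the number of distinct disturbances, $V_R$ the number of distinct responses available to the regulator, and $V_O$ the number of distinct outcomes. Assume, as Ashby does, that for any fixed response, different disturbances lead to different outcomes. The best any regulator can then achieve is bounded below, and the surprises-and-responses example above works out as follows:

$$
V_O \;\ge\; \frac{V_D}{V_R}
\qquad\Longrightarrow\qquad
V_O \;\ge\; \left\lceil \frac{10}{3} \right\rceil = 4
$$

Three kinds of response cannot collapse ten kinds of surprise into one acceptable outcome. At best they collapse them into four.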

This is a decent description of most enterprise AI rollouts.

The environment in question is a jungle of exceptions, tacit knowledge, social norms, inter-team dependencies, local hacks, legacy systems, unstated incentives, and politically sensitive edge cases. Work does not occur in the clean geometry of the demo. It occurs in the slurry. A contract review process is precedent, escalation logic, risk tolerance, client sensitivity, seniority gradients, unspoken red lines, last-minute changes, and the memory of the one time legal got burned two years ago.

Then into this environment comes a tool with one chat box and a sales page.

This is the original sin of enterprise AI: teams mistake the existence of a generally capable model for the existence of a sufficiently varied organizational regulator. They buy a low-variety intervention for a high-variety problem.

Beer's broader Viable System Model makes the diagnosis sharper. Most companies adopt AI at the operational layer and stop there. They increase the productive capacity of local units. More drafts. More summaries. More analysis. More output. But they neglect the functions that sit above and around operations: coordination, control, intelligence, and policy. In Beer's terms, they juice System 1, the operational units, and starve Systems 2 through 5.

When variety floods into the operational layer without corresponding upgrades to coordination infrastructure above it, the result is hyperactivity without coherence.

Teams move faster but align worse. More people can produce more things more quickly, but the organization becomes noisier, not smarter. Documents proliferate. Decisions splinter. Experiments multiply. Exceptions pile up. Managers begin to feel that everyone is working harder while the system as a whole is somehow getting stupider.

This is what happens when a new source of variety is introduced into a system without upgrading the mechanisms that coordinate and constrain it.

AI accelerates execution and amplifies the penalties for weak coordination in equal measure.

That is why so many implementations end up feeling superficially successful and strategically hollow. The tool works. The system doesn't.

2. Maturana: implementation is mutual adaptation, not installation

Even that cybernetic framing can mislead. Taken too far, it makes implementation sound like a matter of fitting the right controller to the right environment. Organizations are living systems, though, and AI reconfigures them in ways no thermostat model captures.

Humberto Maturana offers a better lens: structural coupling.²

The basic idea is that two systems interacting recurrently do not simply transmit commands to one another. They perturb each other. Over time, through repeated interaction, each adapts in ways conditioned by its own structure. Neither system determines the other. They co-evolve.

This describes enterprise AI work far better than the standard account.

The naïve story says: the company adopts AI, and the AI changes the company. That is only half the story.

What really happens is that the organization perturbs the tool through prompts, workflows, permissions, feedback habits, conventions, constraints, and selective use. At the same time, the tool perturbs the organization by changing response times, expectations, visibility, quality bars, decision rhythms, and who is suddenly capable of doing what. These perturbations accumulate. Work gets re-partitioned. Roles blur. New bottlenecks appear. Old forms of expertise lose some status; others become more valuable. Language changes. People begin offloading certain kinds of cognition and guarding others more jealously.

Successful implementation is the gradual emergence of a viable coupling.

"Training" is such a weak word for this work. Good onboarding is an intervention in the coupling dynamics between a new cognitive artifact and an existing organizational form.

That is what many consultants are doing when they are doing their job well, whether they know the phrase or not. They are helping the organization discover where its habits, incentives, and workflow boundaries admit or resist the new tool. They are helping the tool acquire wrappers, prompts, conventions, and rituals that fit the local ecology. They are facilitating repeated interactions until something stable emerges.

The best implementation sessions do not end with "now everyone knows how to use the product." They end with the first signs of mutual adaptation: people have changed how they approach the work, and the tool has been socially and procedurally shaped into something the organization can metabolize.

Seen this way, the fantasy of a perfectly self-onboarding AI platform is premature. Organizations lack the self-knowledge to specify the conditions under which the software should become part of real work. The coupling has to be grown.

And because coupling is path-dependent, those early sessions matter disproportionately. The first ways a team learns to use a model often become the local grammar of AI. Sloppy early coupling creates durable mediocrity. Good early coupling creates compounding advantage.

3. Heidegger: the best AI disappears

Heidegger offers the third piece, and probably the most useful one for practitioners: a way to describe what successful integration feels like from the inside.

He distinguishes between two ways of encountering a tool: as present-at-hand or as ready-to-hand.³ A present-at-hand object is thematized, inspected, noticed as an object. A ready-to-hand tool recedes into use, becoming the medium of action. You are hammering, not contemplating the hammer.

This distinction is the cleanest way I know to talk about successful AI implementation.

Most AI tools in the enterprise remain present-at-hand. They are conspicuous. Users think about them constantly. They scrutinize outputs, babysit prompts, wonder how to phrase things, compare models, second-guess hallucinations, and feel the system as a separate layer added on top of the work. In that mode, the AI is still an object. However powerful it may be, it has not yet become part of fluent action.

The best AI disappears.

Users still know it's there. What changes is that it stops being the thing they're orienting toward. Attention returns to the task. The model becomes an extension of intention rather than a novelty demanding supervision.

This is a more demanding standard than "adoption." Plenty of teams "adopt" tools that never become ready-to-hand. They use them dutifully, intermittently, or ceremonially. The tool remains conspicuous because it misfires, resists context, or requires too much interpretive labor. It never crosses the threshold from impressive object to transparent instrument.

And Heidegger helps explain failure too. Tools become conspicuous when they break. When the hammer snaps, the hammer becomes an object again. Likewise, AI becomes painfully present-at-hand when it misses context, fabricates confidence, mangles local conventions, or requires elaborate workaround rituals to produce something usable. The more a user has to think about the machinery, the less the machinery has been integrated into the work.

Transparency is what implementation is actually trying to achieve, not raw capability.

The highest compliment for an enterprise AI system sounds something like: "I barely think about it anymore; it's just how the work moves." Fluency is the phenomenology of success.

How the frames converge

These three frames fit together more tightly than they first appear.

Beer tells us the system must have enough variety to absorb the complexity of the environment. Maturana tells us the fit cannot simply be imposed; it has to emerge through repeated reciprocal adaptation. Heidegger tells us what that success looks like at the level of lived use: the tool recedes into the task.

Together they give us a much better theory of enterprise AI:

First, the organization must build enough coordination and governance capacity around the tool to avoid becoming faster and dumber.

Second, the organization and the tool must be allowed to co-adapt through use, rather than treated as static entities joined by procurement.

Third, the implementation must aim for transparency over spectacle: the model should dissolve into skilled action rather than remain a permanent object of anxious attention.

"AI transformation" underdelivers for the same reason across company after company. Firms misunderstand the category of problem they are solving.

They think they are buying cognition. Often they are really buying a new source of organizational disturbance.

Whether that disturbance becomes leverage or noise depends less on the model's benchmark scores than on whether the surrounding system can absorb its variety, adapt to its presence, and eventually let it disappear into use.

What this means for people doing implementation work

If you are implementing AI in organizations, your job is to increase the organization's effective variety, guide the early stages of structural coupling, and move the tool toward ready-to-hand transparency. Installing software and explaining features is the easy part.

That means asking design questions rather than product questions:

- Where does work vary?

- Where do exceptions pile up?

- Where do coordination failures happen?

- What norms and policies need to exist around this new capability?

- What patterns of interaction will let the organization and the tool adapt to each other?

- At what point does the AI stop feeling like an extra step and start feeling like part of the work?

They are also political questions, epistemic questions, and sometimes even anthropological ones.

Enterprise AI is an organizational cognition problem wearing a software costume.

And until more companies understand that, the market will remain flooded with a familiar product: expensive new tools dropped into old systems that lack the variety to absorb them, the patience to adapt with them, and the discipline to make them disappear.

An expensive, efficient way to be incoherent.

Footnotes

1. Ashby's Law of Requisite Variety, formalized by W. Ross Ashby in An Introduction to Cybernetics (1956). Stated more fully: the variety of a regulator must be at least as great as the variety of the disturbances it has to counter, or regulation fails. Beer extended Ashby's logic into organizational design through the Viable System Model.

2. In Maturana and Varela's framework, two systems are structurally coupled when recurrent interactions cause each to adapt in ways shaped by its own internal organization. Neither system determines the other. What happens in a coupled system is mutual perturbation, with each system responding according to its own structure rather than the other's intent.

3. Heidegger's canonical example is the hammer. When hammering works, you don't perceive the hammer. Your attention is on the nail, the board, the joint. The hammer is ready-to-hand: transparent, absorbed into action. When it breaks or is missing, it suddenly becomes an object you notice and think about. The breakdown reveals what was previously invisible.