Scoring Systems as Strategy

The CMF framework applied beyond job search

I built a scoring system to fix my job search. Response rate went from 5% to 40% in six weeks. But somewhere around week four, I realized the interesting part wasn't the job search at all.

The interesting part was watching my own priorities become visible for the first time.

When I forced myself to assign weights to dimensions like "company reputation" versus "role fit," I discovered I'd been lying to myself. I thought I cared most about company brand. The weights said otherwise. Role fit got 32%, company tier got 18%. The numbers didn't match my story.

That's the real value of explicit scoring. You discover what you actually believe.

Since then, I've applied the same pattern to lead scoring, feature prioritization, hiring decisions, and content greenlight processes. The domains are different. The pattern is identical. And the core insight remains: explicit scoring exposes hidden assumptions and forces organizational debate in ways that gut feel never can.

The Problem: Gut Feel Doesn't Scale

You've sat in the meeting. Everyone agrees "we should prioritize the important stuff." Then comes the actual list, and suddenly three people are arguing about what "important" means.

One person thinks revenue impact is everything. Another thinks user requests should drive decisions. A third keeps bringing up technical debt. Nobody's wrong. They're just weighting different factors.

Gut feel works fine for individual decisions. But it breaks when:

- Volume increases: You can't carefully evaluate 50 candidates, 200 leads, or 300 feature requests

- Teams disagree: Different people have different mental models of "good"

- Mood affects judgment: Tuesday you're optimistic, Thursday you're conservative

- Memory fades: "Why did we pick that one?" becomes unanswerable

The fix isn't better intuition. It's making the intuition explicit.

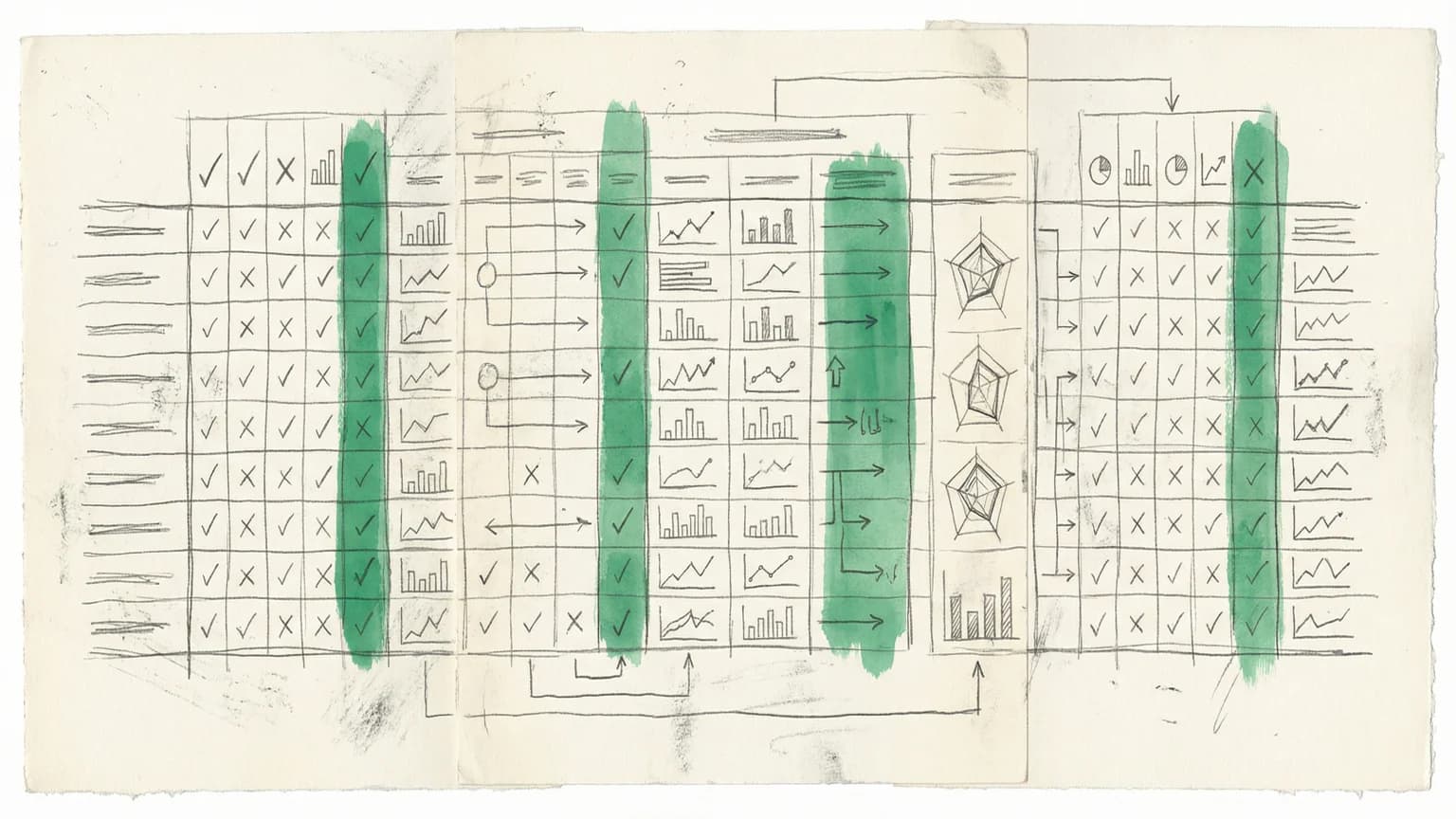

The Pattern: Dimensions + Weights + Thresholds

Every scoring system I've built follows the same structure:

Dimensions are the factors you care about. For a job posting: role fit, company quality, location, timing. For a sales lead: company size, engagement signals, budget indicators. Pick 4-7. Fewer than that, you're oversimplifying. More than that, you're diluting the signal.

Weights force prioritization. You have exactly 100% to distribute. Want company size at 30%? Fine, but that leaves only 70% for everything else. This is the magic part. You can't say "everything matters equally" when the math won't allow it.

Thresholds prevent "well, maybe" decisions. Below 50? Auto-decline. Above 75? Fast-track. The threshold removes the temptation to endlessly deliberate on marginal cases.

The formula:

Total Score = Σ (Dimension Score × Weight)

Each dimension outputs 0-100. Multiply by weight. Sum the results. Done.
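A minimal Python sketch of the formula and the thresholds, under some stated assumptions. Weights are whole percentages that must sum to 100. The role fit and company tier weights come from my job search; the location and timing weights, the example scores, and the "review" label for the middle band are made up for illustration.

```python
def total_score(scores, weights):
    """Weighted sum of 0-100 dimension scores; weights are whole percentages."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to exactly 100%")
    if scores.keys() != weights.keys():
        raise ValueError("every dimension needs both a score and a weight")
    for name, value in scores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} score must be between 0 and 100")
    return sum(scores[name] * weights[name] for name in weights) / 100


def decide(score, decline_below=50, fast_track_above=75):
    """Apply the example thresholds; 'review' is a hypothetical middle band."""
    if score < decline_below:
        return "auto-decline"
    if score > fast_track_above:
        return "fast-track"
    return "review"


# role_fit and company_tier weights are from the text; the rest is illustrative.
weights = {"role_fit": 32, "company_tier": 18, "location": 25, "timing": 25}
posting = {"role_fit": 90, "company_tier": 60, "location": 70, "timing": 50}

score = total_score(posting, weights)
print(score, decide(score))  # 69.6 review
```

The validation is the useful part. The function refuses to run if the weights add up to 90% or 110%, which is the code version of "the math won't allow it."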

The power isn't in the arithmetic. It's in the conversation required to set the weights.

Domain 1: Lead Scoring

The sales team was drowning. Marketing generated 400 leads a month. Sales capacity was 80 conversations. Nobody agreed on which 80 to pick.

The VP of Sales wanted "big logos." The SDR team wanted "high engagement." Marketing wanted "perfect ICP match." All reasonable. All different.

Here's what we built:

| Dimension | Weight | Scoring Logic |
|---|---|---|
| Company Size | 30% | Enterprise (1000+): 100 / Mid-market (100-999): 70 / SMB (<100): 40 |
| Engagement | 25% | Pricing page + demo request: 100 / 3+ page views: 70 / Blog only: 40 / Single visit: 20 |
| ICP Fit | 25% | Target industry + function + title: 100 / Two matches: 70 / One match: 40 / None: 10 |
| Timing | 20% | "Active evaluation": 100 / "Starting research": 60 / "Just browsing": 30 / Unknown: 20 |

The weights tell a story. Company size at 30% means we were optimizing for deal size over volume. Engagement at 25% was a compromise after the SDR team argued for 35%. Timing at 20% was deliberately low because it's the easiest dimension to game.

Threshold: 65. Below that, marketing nurture only. Above that, sales gets the lead.
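Here is one way the table and threshold could be worked through in Python. The weights, size bands, and threshold come from the table; the example lead is hypothetical, and sending a lead that scores exactly 65 to sales is my assumption, since the rule above only covers "below" and "above."

```python
# Weights (whole percentages) and threshold from the lead-scoring table.
WEIGHTS = {"company_size": 30, "engagement": 25, "icp_fit": 25, "timing": 20}
THRESHOLD = 65


def company_size_score(employees):
    """Map headcount to the Company Size bands in the table."""
    if employees >= 1000:
        return 100  # Enterprise
    if employees >= 100:
        return 70   # Mid-market
    return 40       # SMB


def lead_score(dimension_scores):
    """Weighted sum of the four 0-100 dimension scores."""
    return sum(dimension_scores[d] * w for d, w in WEIGHTS.items()) / 100


def route(score):
    # Assumption: a lead scoring exactly 65 goes to sales.
    return "sales" if score >= THRESHOLD else "nurture"


# Hypothetical lead: 450 employees, pricing page + demo request,
# two ICP matches, "starting research".
lead = {
    "company_size": company_size_score(450),
    "engagement": 100,
    "icp_fit": 70,
    "timing": 60,
}

score = lead_score(lead)
print(score, route(score))  # 75.5 sales
```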

The Real Value

The real value wasn't the automation. It was the debate.

The VP had to justify why company size deserved 30% instead of the 40% he initially wanted. The SDR manager had to defend why engagement signals were reliable. Marketing had to explain why ICP fit should matter at all.

By the end, everyone understood the model. When someone complained about lead quality, we could point to specific weights. "You want more engaged leads? Increase engagement from 25% to 35%. Here's what that does to your pipeline mix."

The arguments became productive because they had a shared vocabulary.
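The "here's what that does to your pipeline mix" answer can be sketched as a what-if comparison. Everything below except the current weights and the 65 threshold is hypothetical: the three leads are invented, and I assume the extra 10 points for engagement are taken from company size, because the weights still have to sum to 100.

```python
CURRENT = {"company_size": 30, "engagement": 25, "icp_fit": 25, "timing": 20}
# Assumption: the 10 points added to engagement come out of company size.
PROPOSED = {**CURRENT, "engagement": 35, "company_size": 20}

# Hypothetical leads, already scored 0-100 per dimension.
LEADS = {
    "big_logo_lukewarm": {"company_size": 100, "engagement": 40, "icp_fit": 70, "timing": 60},
    "small_but_engaged": {"company_size": 40, "engagement": 100, "icp_fit": 70, "timing": 30},
    "solid_all_round": {"company_size": 70, "engagement": 70, "icp_fit": 100, "timing": 100},
}


def score(lead, weights):
    return sum(lead[d] * w for d, w in weights.items()) / 100


def routing_changes(leads, old, new, threshold=65):
    """List only the leads whose sales/nurture routing flips under new weights."""
    changes = {}
    for name, lead in leads.items():
        before = score(lead, old) >= threshold
        after = score(lead, new) >= threshold
        if before != after:
            changes[name] = "gains sales follow-up" if after else "drops to nurture"
    return changes


print(routing_changes(LEADS, CURRENT, PROPOSED))
# {'big_logo_lukewarm': 'drops to nurture', 'small_but_engaged': 'gains sales follow-up'}
```

Running a proposed weight change against last month's real leads before the meeting turns "I think engagement matters more" into a list of specific leads that would have been routed differently.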

Domain 2: Feature Prioritization

Product teams love frameworks. RICE. MoSCoW. ICE. Each one fixes in advance how its factors combine.

The problem with fixed frameworks is they encode someone else's priorities. RICE says Reach, Impact, Confidence, and Effort all matter. But how much? That depends on your strategy.

Here's the system I built for a B2B SaaS product post-Series B:

| Dimension | Weight | Scoring Logic |
|---|---|---|
| User Impact | 35% | Solves top-3 pain point: 100 / Top-10 pain point: 70 / Nice-to-have: 40 / No signal: 10 |
| Effort (inverse) | 25% | < 1 week: 100 / 1-2 weeks: 80 / 2-4 weeks: 50 / 1-2 months: 30 / > 2 months: 10 |
| Strategic Alignment | 25% | Direct path to annual goal: 100 / Supporting goal: 60 / Tangentially related: 30 / Unrelated: 10 |
| Revenue Impact | 15% | Unlocks new segment: 100 / Expands accounts: 80 / Reduces churn: 60 / Indirect: 30 |

User Impact at 35% was highest because we were in a competitive market, and features that solved real pain points created switching costs. Strategic Alignment at 25% kept us honest about saying no. Revenue Impact at 15% was deliberately low because chasing revenue directly had led us to build features for individual customers that didn't scale.

Threshold: 70 for "next quarter," 50 for "backlog," below 50 for "not now."
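A rough sketch of the inverse effort scale and the three tiers. The weights, bands, and thresholds come from the table above. Two things are my assumptions: a month is treated as four weeks, and an estimate that lands exactly on a band boundary gets the higher score. The example feature is hypothetical.

```python
WEIGHTS = {"user_impact": 35, "effort": 25, "strategic_alignment": 25, "revenue_impact": 15}


def effort_score(weeks):
    """Inverse scale: less effort scores higher.

    Assumptions: one month = four weeks; boundary values take the higher band.
    """
    if weeks < 1:
        return 100
    if weeks <= 2:
        return 80
    if weeks <= 4:
        return 50
    if weeks <= 8:
        return 30
    return 10


def feature_score(scores):
    return sum(scores[d] * w for d, w in WEIGHTS.items()) / 100


def tier(score):
    if score >= 70:
        return "next quarter"
    if score >= 50:
        return "backlog"
    return "not now"


# Hypothetical feature: top-10 pain point, three weeks of work,
# direct path to the annual goal, reduces churn.
feature = {
    "user_impact": 70,
    "effort": effort_score(3),
    "strategic_alignment": 100,
    "revenue_impact": 60,
}

score = feature_score(feature)
print(score, tier(score))  # 71.0 next quarter
```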

Weights Change With Strategy

Six months earlier, when we were still searching for PMF, the weights looked different:

| Dimension | Old Weight | New Weight |
|---|---|---|
| User Impact | 40% | 35% |
| Effort (inverse) | 30% | 25% |
| Strategic Alignment | 15% | 25% |
| Revenue Impact | 15% | 15% |

Early stage: ship fast, learn from users. Later stage: align to strategy, make bets that compound.

The system didn't change. The weights did. That's the point.
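To see what the shift does in practice, here is a small sketch that ranks the same two features under both weight sets. The weights are from the table; the two features and their dimension scores are hypothetical.

```python
OLD = {"user_impact": 40, "effort": 30, "strategic_alignment": 15, "revenue_impact": 15}
NEW = {"user_impact": 35, "effort": 25, "strategic_alignment": 25, "revenue_impact": 15}

# Hypothetical features scored 0-100 per dimension.
FEATURES = {
    # Loved by users, trivial to ship, unrelated to the annual goal.
    "quick_win": {"user_impact": 100, "effort": 100, "strategic_alignment": 10, "revenue_impact": 30},
    # Slower to build, but directly on the path to the annual goal.
    "strategic_bet": {"user_impact": 70, "effort": 30, "strategic_alignment": 100, "revenue_impact": 80},
}


def score(feature, weights):
    return sum(feature[d] * w for d, w in weights.items()) / 100


def ranking(features, weights):
    """Feature names ordered from highest to lowest score."""
    return sorted(features, key=lambda name: score(features[name], weights), reverse=True)


for label, weights in (("old", OLD), ("new", NEW)):
    scores = {name: score(f, weights) for name, f in FEATURES.items()}
    print(label, scores, ranking(FEATURES, weights))
# old {'quick_win': 76.0, 'strategic_bet': 64.0} ['quick_win', 'strategic_bet']
# new {'quick_win': 67.0, 'strategic_bet': 69.0} ['strategic_bet', 'quick_win']
```

Same function, same inputs, different weights, and the order flips.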

Domain 3: Hiring Decisions

Hiring is where gut feel does the most damage. Interviewers walk out of a conversation with a strong opinion and no ability to explain it. "I just don't see it." "Something felt off." "Great culture fit." None of this helps the hiring committee make a decision.

A scoring system doesn't eliminate bias, but it surfaces it.

For a senior PM role:

| Dimension | Weight | Scoring Logic |
|---|---|---|
| Skills Match | 40% | All must-haves demonstrated with examples: 100 / Most demonstrated: 70 / Gaps: 40 / Major gaps: 20 |
| Culture Add | 30% | New perspective + values alignment: 100 / Strong values alignment: 70 / Adequate: 50 / Concerns: 20 |
| Growth Potential | 30% | Clear trajectory + rapid learning evidence: 100 / Steady progression: 70 / Unclear: 40 / Stagnant: 20 |

Note the language: "Culture add," not "culture fit." Fit implies you want people like the existing team. Add implies you want people who make the team better in new ways.

Skills Match at 40% was largest because this was a senior role. Culture Add at 30% captured whether this person brings strengths the team doesn't currently have. Growth Potential at 30% was about the two-year bet.

Each interviewer scores their assigned dimensions independently. Then we compare.

Structured Disagreement

The value isn't agreement. It's disagreement with specificity.

"You gave her a 50 on culture add. Walk me through that."

"She talked about preferring consensus decision-making. We're a company that moves fast. I don't think that's a values issue, but it might slow her down."

Now we have something to discuss. We're debating something concrete rather than trading gut feelings.

Threshold: 70. Below that, pass. Above 70, offer. Above 85, move fast.
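The "score independently, then compare" step might look something like this. The weights are from the table above. The panel, the 30-point spread that triggers a conversation, and averaging each dimension across interviewers are all assumptions for the sketch, not a description of the actual process.

```python
WEIGHTS = {"skills_match": 40, "culture_add": 30, "growth_potential": 30}

# Hypothetical panel: each interviewer scores only their assigned dimensions.
PANEL = {
    "interviewer_a": {"skills_match": 70, "culture_add": 50},
    "interviewer_b": {"culture_add": 100, "growth_potential": 70},
    "interviewer_c": {"skills_match": 70, "growth_potential": 70},
}


def by_dimension(panel):
    """Collect every interviewer's score under its dimension."""
    grouped = {name: [] for name in WEIGHTS}
    for scores in panel.values():
        for name, value in scores.items():
            grouped[name].append(value)
    return grouped


def disagreements(panel, min_spread=30):
    """Dimensions where highest and lowest scores differ by min_spread or more."""
    spreads = {name: max(vals) - min(vals) for name, vals in by_dimension(panel).items()}
    return {name: spread for name, spread in spreads.items() if spread >= min_spread}


def panel_score(panel):
    """Assumption: average each dimension across interviewers, then weight."""
    grouped = by_dimension(panel)
    return sum(sum(vals) / len(vals) * WEIGHTS[name] for name, vals in grouped.items()) / 100


print(disagreements(PANEL))  # {'culture_add': 50}
print(panel_score(PANEL))    # 71.5
```

The averaged total clears the bar, but the flagged dimension is where the debrief time should go. An average of 75 on culture add hides a 50 and a 100.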

Domain 4: Content Greenlight

I worked with a media company that greenlit around 20 projects a year out of 200 pitches. The selection process was a mystery. Nobody could explain why Project A beat Project B.

We built this:

| Dimension | Weight | Scoring Logic |
|---|---|---|
| Expected Value | 30% | P(success) × potential reach: calculated formula |
| Audience Fit | 25% | Core audience, known interest: 100 / Core, new topic: 70 / Adjacent: 50 / New audience: 25 |
| Production Risk | 25% | Proven team + clear scope: 100 / One of two: 60 / Neither: 20 |
| Strategic Timing | 20% | Cultural moment exists: 100 / Evergreen: 60 / Saturated: 30 / Unclear: 40 |

Expected Value at 30% used probability estimation. A long-shot project (20% success probability) with massive potential reach (10M) scored the same as a safe bet (80% success probability) with modest reach (2.5M). The company wanted big swings.
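The arithmetic behind that comparison, as a short sketch. It only computes the raw expected reach; how that number was converted to a 0-100 dimension score is not covered here, so the code does not attempt it. Probabilities are passed as whole percentages to keep the arithmetic exact.

```python
def expected_reach(success_percent, potential_reach):
    """P(success) x potential reach, with the probability as a whole percent."""
    return success_percent * potential_reach / 100


long_shot = expected_reach(20, 10_000_000)  # 20% chance of reaching 10M
safe_bet = expected_reach(80, 2_500_000)    # 80% chance of reaching 2.5M

print(long_shot, safe_bet)    # 2000000.0 2000000.0
print(long_shot == safe_bet)  # True
```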

Production Risk at 25% was surprisingly important. The data showed production risk killed more projects than mediocre ideas. An okay concept with a proven team shipped. A brilliant concept with an unproven team often never shipped at all.

Threshold: 60 for "develop further," 75 for "greenlight," below 50 for "pass."

Exposing Philosophical Debates

The weights exposed a debate the company hadn't realized it was having. Should production risk be weighted equally with audience fit? That means a safe, boring project with a proven team could beat an exciting, risky project with a new director.

The executive team split. Half wanted production risk at 35%. Half wanted it at 15%. They compromised at 25%, but the conversation itself was valuable. The company had to articulate its risk tolerance in a way it never had before.

Why Transparency Beats Flexibility

Every time I propose scoring systems, someone pushes back. "I don't want to be constrained by a formula." "I trust my judgment."

Fair concerns. Wrong conclusion.

The constraint is the feature, not the bug. When you're forced to assign weights, you discover your real priorities. Maybe you learn that "revenue impact" deserves 40%, not the 20% you assumed. Maybe you find out that "strategic alignment" has been eating all your decisions at an implicit 50%. Either way, you know more than you did.

The formula doesn't replace judgment. It captures judgment in a form that can be examined, debated, and improved.

When weights become explicit, they become the subject of organizational debate. "Should we really weight company size at 30%?" These are strategy conversations disguised as arithmetic. They force the team to articulate priorities that otherwise stay hidden.

And here's the thing about flexibility: most of the time, "I want flexibility" means "I want to make exceptions without justifying them." The scoring system doesn't forbid exceptions. It just makes them visible. You can override a score. You just have to explain why.
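One way to make "you can override, you just have to explain why" concrete is to have the decision record enforce it. This is a hypothetical sketch, and the `Decision` class and its field names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """A recorded decision; going against the score requires a written reason."""
    item: str
    score: float
    threshold: float
    approved: bool
    override_reason: str = ""

    def __post_init__(self):
        if self.is_override and not self.override_reason.strip():
            raise ValueError("overriding the score requires a written reason")

    @property
    def is_override(self):
        # An override is any decision that disagrees with the threshold rule.
        return self.approved != (self.score >= self.threshold)


# Allowed: the exception is visible and explained.
exception = Decision("feature-x", 62, 70, approved=True,
                     override_reason="Committed in a signed renewal")
print(exception.is_override)  # True

# Not allowed: a silent exception raises ValueError.
# Decision("feature-y", 62, 70, approved=True)
```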

Transparency doesn't eliminate politics. It makes politics accountable.

Implementation Notes

Start with spreadsheets. Don't build software until you've validated the dimensions and weights with real data. Score 20-30 items manually. See what feels wrong. Adjust. Only automate after you've calibrated.

Expect resistance. "This feels mechanical." "You can't reduce decisions to numbers." The response: "We're not reducing decisions to numbers. We're making our reasoning visible." People come around when they see the debates improve.

Review weights quarterly. Priorities change. The dimensions probably stay stable. The weights need recalibration.

Preserve the breakdown. The total score is for sorting. The breakdown is for understanding. When something scores 55, you need to see it's 90 on strategic fit but 20 on effort. That's actionable.
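A sketch of keeping the breakdown attached to the total, using the feature-prioritization weights. The item below is hypothetical, chosen so it reproduces the "scores 55, but 90 on strategic fit and 20 on effort" case.

```python
WEIGHTS = {"user_impact": 35, "effort": 25, "strategic_alignment": 25, "revenue_impact": 15}


def breakdown(scores):
    """Return (total, rows) so the total never travels without its parts.

    Each row is (dimension, raw score, weight %, weighted points).
    """
    rows = [
        (name, scores[name], weight, scores[name] * weight / 100)
        for name, weight in WEIGHTS.items()
    ]
    total = sum(row[3] for row in rows)
    return total, rows


# Hypothetical item: strong strategic fit, very expensive to build.
total, rows = breakdown(
    {"user_impact": 70, "effort": 20, "strategic_alignment": 90, "revenue_impact": 20}
)

print(f"total: {total}")
for name, raw, weight, points in rows:
    print(f"{name:<20} raw {raw:>3}  x {weight}%  = {points}")
```

Sorted by total, this item looks mediocre. The rows show it is a strong idea with a cost problem, which is a different conversation.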

Watch for gaming. Any system can be gamed. Lead scoring gets gamed by marketing teams who optimize for score instead of quality. Feature prioritization gets gamed by PMs who inflate confidence. Review outliers regularly. If everything scores 90+, your dimensions are broken.
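The "everything scores 90+" check is easy to automate as part of the outlier review. A minimal sketch; the 90 cutoff is from the text, while the 50% share that counts as "too many" is an arbitrary assumption you would tune.

```python
def looks_inflated(scores, high=90, max_share=0.5):
    """True if more than max_share of the scores are at or above `high`."""
    if not scores:
        return False
    share = sum(1 for s in scores if s >= high) / len(scores)
    return share > max_share


# Hypothetical batches of total scores.
print(looks_inflated([95, 92, 91, 97, 60]))  # True
print(looks_inflated([95, 72, 41, 66, 58]))  # False
```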

When Not to Score

Scoring systems work best for decisions that are:

- Repeated: You'll make this decision many times

- Comparable: Items can be evaluated on the same dimensions

- Volume-dependent: Too many options to evaluate deeply

- Team-based: Multiple people need to align

They work poorly for unique decisions (acquisitions, pivots), rapidly changing contexts (criteria shift weekly), and simple choices (only 3 options, just discuss them).

The Meta-Lesson

The CMF pattern teaches something beyond any single domain: your decisions reveal your values, whether you articulate them or not.

When you assign weights, you're not creating priorities from nothing. You're surfacing priorities that already exist in your head. The act of writing down "user impact: 35%, effort: 25%" forces you to confront the implicit trade-offs you've been making all along.

I thought I cared most about working at a prestigious company. The scoring exercise revealed I actually cared most about the work itself. Role fit was at 32%. Company tier was at 18%. My actions hadn't matched my words.

Explicit systems beat implicit assumptions. Not because the systems are smarter, but because they're visible. You can argue with visible. You can improve visible. Hidden assumptions just run the show without your permission.

Build the scorecard. Assign the weights. Discover what you actually believe.

Then have the conversation about whether those beliefs are right.