The AI Coding Factory Playbook

How engineering teams set up and run AI coding agents at scale, reverse-engineered from Stripe, Spotify, Ramp, OpenAI, and Cursor


Stripe merges over 1,300 agent-written pull requests per week.1 Spotify has shipped 1,500+ AI-generated PRs and attributes ~50% of its merged PRs since mid-2024 to Fleetshift automation.2 Ramp reached 30% of all merged PRs from its internal agent within a couple of months of launching.3 OpenAI built a 1-million-line codebase with a team that grew from three to seven engineers and zero manually written code.4

These are not experiments. They are production systems at scale. And every one of them has published how they work.

This playbook synthesizes those architectures into a single decision framework. It is organized by decision area — not by company — and answers the question every engineering team is now facing: how do we actually do this?

How to use this document

This playbook covers 15 decision areas. The maturity model at the end maps everything to phases — if you want to know where to start, start there.

Quick reference

| I want to... | Go to |

|---|---|

| Get started today | Phase 0 checklist |

| Decide local vs. cloud | Local vs. cloud decision |

| Write good prompts | Prompting and task design |

| Know what NOT to use agents for | When not to use agents |

| Understand costs | Cost and budget |

| See real-world metrics | Metrics from the field |

For a small team: One person owns AGENTS.md. One person reviews agent PRs. Everyone learns prompting. Start with one-shot tasks on well-scoped work.

1. Execution environment

The foundational question: where does the agent run?

The consensus

Every organization that has published its architecture chose the same pattern: the agent gets a full development environment — not a thin sandbox, not a code-only container, but a complete replica of what a human developer works in.

- Stripe: Pre-warmed isolated devboxes on AWS EC2, spin up in 10 seconds, Stripe code and services pre-loaded. Isolated from production and the internet.5

- Ramp: Sandboxed VM per session on Modal. Vite, Postgres, Temporal, full stack. File system snapshots for freeze/restore.6

- Spotify: Containerized execution, highly restricted. "The agent runs in a container with limited permissions, few binaries, and virtually no access to surrounding systems."7

- OpenAI: App bootable per git worktree, each Codex run in a fully isolated version of the app including logs and metrics.8

- Cursor: Each agent gets its own copy of the repo on a large Linux VM.9

Where they diverge

| Choice | VM (Stripe, Ramp) | Container (Spotify) | Shared machine (Cursor) |

|---|---|---|---|

| Isolation | Strongest (kernel-level) | Moderate (shared kernel) | Weakest (process-level) |

| Startup speed | 10s (Stripe), near-instant (Ramp/Modal) | Fast | Fast (already running) |

| Cost | Higher per session | Lower | Lowest |

| Security | Best for production orgs | Adequate for sandboxed scope | Research only |

ONA's CTO on this: "Ideally that's a VM as opposed to a container that's sharing a kernel for security and reproducibility reasons."10

Getting started

- Define the image: What does a developer need to run the app, run tests, and hit internal APIs? That's your agent image.

- Pre-build everything: Ramp builds images every 30 minutes. "Move as much as you can to the build step... Even things like running your app and test suite once are helpful, as these may write cached files that a second run will make use of."11

- Snapshot and restore: When a session ends, snapshot the state. Restore on follow-up. This avoids a full clone on every interaction.

- Warm sandbox pools: "Warm the sandbox as soon as a user starts typing their prompt. Keep a pool of warm sandboxes for high-volume repositories."12
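The warm-pool advice above can be sketched in a few lines. This is a minimal illustration, not Ramp's implementation: `WarmPool`, `Sandbox`, and `fake_boot` are hypothetical names, and a real `boot` function would start a VM or container from the latest pre-built image.

```python
from collections import deque
from dataclasses import dataclass, field
from itertools import count
from typing import Callable, Deque


@dataclass
class Sandbox:
    sandbox_id: int
    repo: str


@dataclass
class WarmPool:
    """Keeps `target_size` pre-booted sandboxes ready for one repository."""
    repo: str
    target_size: int
    boot: Callable[[str], Sandbox]  # hypothetical: boots a sandbox from the latest image
    _ready: Deque[Sandbox] = field(default_factory=deque)

    def refill(self) -> None:
        # Boot sandboxes until the pool is back at its target size.
        while len(self._ready) < self.target_size:
            self._ready.append(self.boot(self.repo))

    def acquire(self) -> Sandbox:
        # Hand out a warm sandbox if one exists; otherwise pay the cold-start cost.
        sandbox = self._ready.popleft() if self._ready else self.boot(self.repo)
        self.refill()
        return sandbox


_ids = count(1)


def fake_boot(repo: str) -> Sandbox:
    # Stand-in for a real VM or container boot call.
    return Sandbox(sandbox_id=next(_ids), repo=repo)


pool = WarmPool(repo="payments", target_size=2, boot=fake_boot)
pool.refill()
first = pool.acquire()
```

In practice the refill would run in the background so that `acquire` never waits on a boot; it is inline here only to keep the sketch short.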

Decision: local vs. cloud {#decision-local-vs-cloud}

If you are a small team and can tolerate agents running on developer machines, start there. Use worktrees for isolation: `git worktree add ../task-name -b feat/initials-task`. This is the cheapest path and requires zero infrastructure.
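If you want to script the worktree command above rather than type it per task, a small wrapper is enough. The sketch below assumes the `feat/<initials>-<task>` branch convention from that command; the function names and the slug rule are illustrative choices, not part of any tool.

```python
import re
import subprocess
from typing import List


def worktree_command(task: str, initials: str) -> List[str]:
    """Build the `git worktree add` command for one agent task."""
    # Turn a free-form task name into a safe directory and branch slug.
    slug = re.sub(r"[^a-z0-9]+", "-", task.lower()).strip("-")
    if not slug:
        raise ValueError("task name must contain letters or digits")
    return ["git", "worktree", "add", f"../{slug}", "-b", f"feat/{initials}-{slug}"]


def create_worktree(task: str, initials: str, repo_dir: str = ".") -> None:
    # Runs git inside repo_dir; raises CalledProcessError if git fails.
    subprocess.run(worktree_command(task, initials), cwd=repo_dir, check=True)
```

Calling `create_worktree("Fix login bug", "ab")` from inside a repository would create `../fix-login-bug` on a new branch `feat/ab-fix-login-bug`.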

If you need agents to run while developers are offline, or you need parallel sessions beyond what a laptop can support, move to cloud-hosted VMs. The industry is moving here rapidly.

2. Context engineering

How does the agent know what to do and how to do it?

The spectrum

Organizations fall on a spectrum from minimal context (just the codebase) to heavy pre-loaded context (rules, docs, tool output). No one loads zero context. No one loads everything.

What to put in context

| Layer | Content | Who does this |

|---|---|---|

| Map (always loaded) | Short AGENTS.md or CLAUDE.md pointing to deeper docs. Build commands, directory structure, key patterns. | OpenAI: ~100-line AGENTS.md as "table of contents." Spotify: large static prompts. |

| Rules (conditional) | Coding conventions, ownership zones, architecture constraints. | Stripe: "Almost all rules conditionally applied by subdirectory." Avoids irrelevant rules flooding context. |

| Task context (per-run) | The specific ticket, issue, or specification. File list. Related docs. | Spotify: "Condense relevant context into the prompt up front." Stripe: Pre-hydrate from Slack thread and linked docs. |

| Live state (tools) | Build output, test results, lint errors, telemetry. | Spotify: MCP tools for verify, git, bash. Ramp: Sentry, Datadog, LaunchDarkly, feature flags. |

What does not work

- One giant instruction file. OpenAI tried it. "A monolithic manual turns into a graveyard of stale rules. Agents can't tell what's still true, humans stop maintaining it."13

- Too many tools. Stripe has 400+ internal MCP tools but curates down to ~15 per run. "Prevent 'token paralysis' — where models become overwhelmed by too many options."14

- Dynamic code search as primary context. Spotify deliberately avoids it: "We don't currently have code search or documentation tools exposed to our agent. We instead ask users to condense relevant context into the prompt up front."15
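The sources above do not say how Stripe picks its ~15 tools per run, so the following is only a naive stand-in to show the shape of the idea: score each tool description against the task and keep the top few. The word-overlap scoring, the `curate_tools` name, and the sample catalog are all assumptions.

```python
from typing import Dict, List


def curate_tools(task: str, tools: Dict[str, str], limit: int = 15) -> List[str]:
    """Return up to `limit` tool names ranked by word overlap with the task."""
    task_words = set(task.lower().split())
    scored = []
    for name, description in tools.items():
        overlap = len(task_words & set(description.lower().split()))
        if overlap > 0:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [name for _, name in scored[:limit]]


# Hypothetical three-tool catalog; a real one would hold hundreds of entries.
catalog = {
    "run_tests": "run the unit tests for a package",
    "search_code": "search code across repositories",
    "flag_lookup": "look up a feature flag value",
}
selected = curate_tools("fix failing unit tests in billing package", catalog, limit=2)
```

A production selector would more likely use task type, repository, or embeddings, but the contract is the same: many tools in, a short relevant list out.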

Static vs. dynamic context

Spotify makes the strongest case for static prompts: "We prefer to have larger static prompts, which are easier to reason about. You can version-control the prompts, write tests, and evaluate their performance."16

Stripe takes a hybrid approach with "Blueprints": orchestration flows that alternate deterministic code nodes with agent loops. Context is pre-fetched deterministically before the agent loop starts.17
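One way to picture that alternation is a list of nodes, each tagged as deterministic code or as an agent loop, run in order over shared state. This is a sketch of the general pattern only; Stripe has not published this interface, and `run_blueprint` and the three step functions are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]
Node = Tuple[str, Callable[[State], State]]  # (kind, step); kind is "code" or "agent"


def run_blueprint(nodes: List[Node], state: State) -> State:
    """Run nodes in order, recording which kind of node touched the state."""
    trace: List[str] = []
    for kind, step in nodes:
        if kind not in ("code", "agent"):
            raise ValueError(f"unknown node kind: {kind}")
        state = step(dict(state))  # each step gets a copy and returns the next state
        trace.append(kind)
    state["trace"] = trace
    return state


def fetch_context(state: State) -> State:
    # Deterministic: a real system would read the ticket and linked docs here.
    state["context"] = f"ticket text for {state['ticket']}"
    return state


def agent_edit(state: State) -> State:
    # Placeholder for the model-driven loop; it only records that it ran.
    state["diff"] = f"changes based on: {state['context']}"
    return state


def run_lint(state: State) -> State:
    # Deterministic check after the agent loop.
    state["lint_ok"] = bool(state["diff"])
    return state


result = run_blueprint(
    [("code", fetch_context), ("agent", agent_edit), ("code", run_lint)],
    {"ticket": "T-1"},
)
```

The point of the structure is that context fetching and checking never depend on the model deciding to do them.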

OpenAI uses progressive disclosure: "Give Codex a map, not a 1,000-page instruction manual." Short AGENTS.md points to a structured docs/ directory. Agent navigates deeper as needed.18

Getting started

- Write an AGENTS.md (or CLAUDE.md) under 200 lines. Include: build commands, directory structure, key patterns, ownership zones, what NOT to touch.

- If you have a large codebase, scope rules by subdirectory. Don't apply everything globally.

- For each agent task, define the end state explicitly. "Make this code better" is not a prompt. The agent needs a verifiable goal.19

- Pre-load the minimum context needed. Add more only when agent output quality suffers without it.
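Scoping rules by subdirectory, as suggested above, can be as simple as a mapping from directory to rules plus a lookup over the files a task touches. The rule texts and directory names below are invented for illustration; `"."` is used here to mean "applies everywhere".

```python
from pathlib import PurePosixPath
from typing import Dict, Iterable, List


def rules_for(changed_files: Iterable[str], scoped_rules: Dict[str, List[str]]) -> List[str]:
    """Collect rules whose directory scope contains at least one changed file."""
    files = [PurePosixPath(f) for f in changed_files]
    selected: List[str] = []
    for scope, rules in scoped_rules.items():
        scope_path = PurePosixPath(scope)
        # A file is in scope when the scope directory is one of its ancestors.
        if any(scope_path in f.parents for f in files):
            selected.extend(rules)
    return selected


# Hypothetical rule set keyed by directory.
RULES = {
    ".": ["Run the linter before committing."],
    "services/billing": ["Money amounts are integers in minor units."],
    "web": ["Use the shared design-system components."],
}
active = rules_for(["services/billing/invoice.py"], RULES)
```

Here a billing change loads the global rule and the billing rule, and the `web` rule stays out of context.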

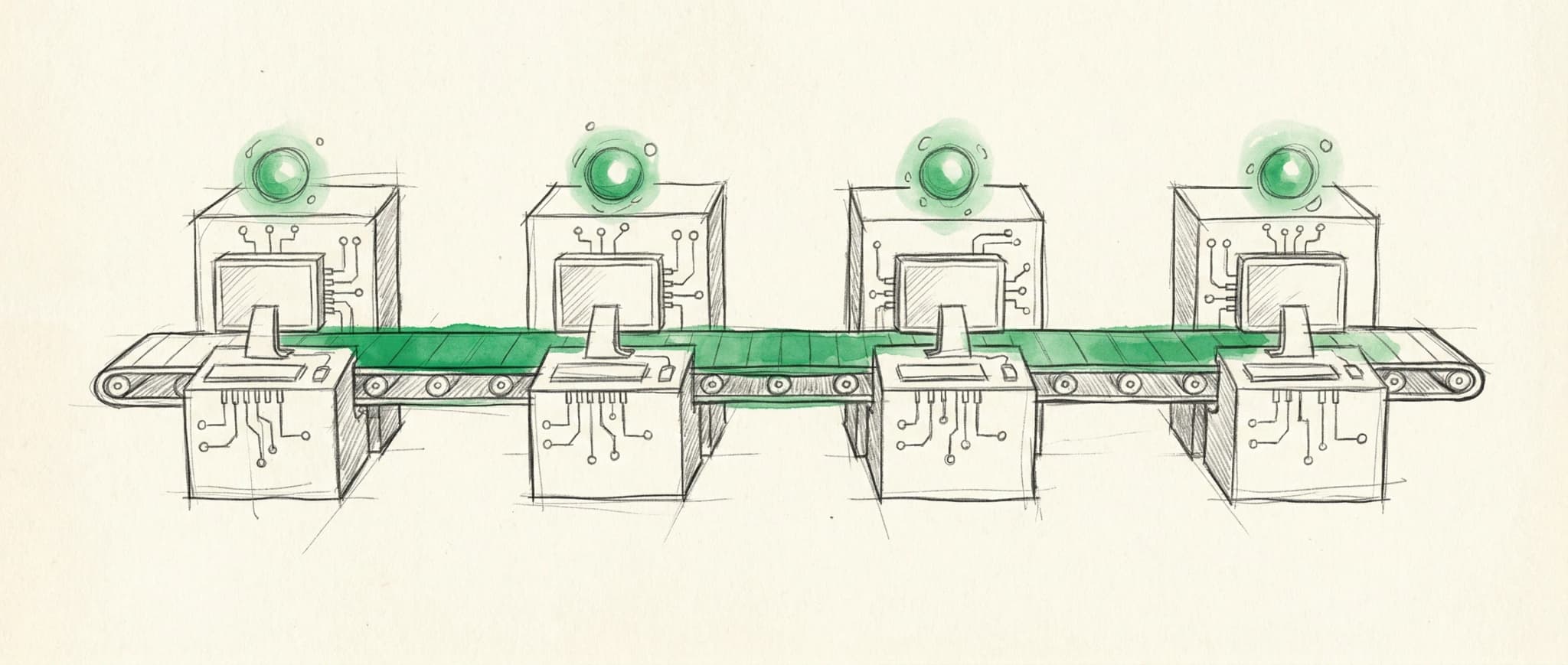

3. Agent architecture

One agent or many? One shot or long-running? What orchestrates them?

Pattern 1: One-shot, single agent (Stripe, Spotify)

The agent receives a prompt, edits code, runs verification, and produces a PR. The surrounding system handles everything else.

- Stripe: "Given a ticket, bug report, or specification, they produce a complete pull request without human intervention during the coding process." One-shot, terminates at PR, max 2 CI rounds.

- Spotify: "Our background coding agent is built to do one thing: take a prompt and perform a code change to the best of its ability. Pushing code, interacting with users on Slack, and even the authoring of prompts are all managed by surrounding infrastructure."20

When to use: Migrations, well-scoped bug fixes, config changes, dependency updates, test generation. Tasks where the end state is precisely describable.

Pattern 2: Long-running, single agent (OpenAI, Cursor)

The agent works for hours or days on a larger task. Plans, executes, verifies, iterates.

- OpenAI: "We regularly see single Codex runs work on a single task for upwards of six hours (often while the humans are sleeping)."21

- Cursor: Agents ran 25-52 hours. "I planned for this project to take an entire quarter. With Cursor long-running agents, that timeline compressed to just a couple days."22

When to use: Feature implementation, large refactors, system overhauls, architectural changes. Tasks where discovery and iteration are required.

Pattern 3: Multi-agent swarm (Cursor self-driving)

Many agents work simultaneously on different parts of the system. A hierarchy of planners and workers coordinates without direct cross-talk.

- Cursor: Root planner → sub-planners → workers. "Workers pick up tasks and are solely responsible for driving them to completion. They're unaware of the larger system. They work on their own copy of the repo." Peaked at ~1,000 commits per hour.23

When to use: Greenfield projects, massive migrations, research prototypes. Requires significant infrastructure investment.

Pattern 4: Fleet (Spotify Fleetshift, background-agents.com)

Same task applied across many repositories in parallel. Each agent works independently on its repository.

- Spotify: "Replacing deterministic migration scripts with an agent that takes instructions from a prompt. All surrounding Fleet Management infrastructure — targeting repositories, opening pull requests, getting reviews, and merging into production — remains exactly the same."24

- background-agents.com: "Updating one repository is a coding agent task. Updating 500 is a fleet task."25

When to use: Org-wide migrations, dependency updates, policy enforcement, linting sweeps.

Decision framework

| Factor | One-shot | Long-running | Multi-agent | Fleet |

|---|---|---|---|---|

| Task complexity | Low-medium | High | Very high | Low per-repo |

| Scope | Single change | Feature/system | Entire project | Cross-repo |

| Infrastructure needed | Minimal | Moderate | Significant | Moderate + targeting |

| Human involvement | Review PR | Review PR | Steer + review | Review PRs |

| Best starting point? | Yes | After one-shot works | Not initially | After one-shot works |

When not to use agents {#when-not-to-use-agents}

Not every task benefits from agent delegation. Avoid agents for:

- Greenfield architecture decisions where the design itself is the work.

- Security-critical changes that require deep domain expertise and careful manual review.

- Exploratory debugging where the problem isn't well-defined yet.

- Tasks requiring external context the agent can't access (conversations, stakeholder intent, political dynamics).

Good starting tasks: Config migration, test generation, dependency updates, well-scoped bug fixes with clear reproduction steps, lint/format enforcement, boilerplate generation.

Getting started

Start with one-shot, single-agent tasks. Pick a well-scoped task (test generation, config migration, dependency update). Get one agent producing good PRs. Then scale horizontally (more tasks) before scaling vertically (longer/more complex tasks).

4. Feedback loops and verification

How do you know the agent's output is correct?

The verification stack

Every organization layers multiple verification mechanisms. The pattern is consistent:

Fast, cheap, local → Slow, expensive, comprehensive

─────────────────────────────────────────────────────────────────

Format/lint → Build → Test → LLM judge → Human review → CI → Deploy

Shift feedback left

Stripe is aggressive about this: "Any lint step that would fail in CI is enforced in the IDE or on a git push. Local linting runs in under five seconds using heuristics." They cap CI to max 2 rounds. "Diminishing marginal returns for an LLM to run many rounds of a full CI loop."26

Spotify runs verifiers before attempting to open a PR: "If one of the verifiers fails, the PR isn't opened and the user is presented with an error message."27

LLM-as-judge

Spotify adds an LLM judge after deterministic verifiers: "Uses the diff of the proposed change and the original prompt, and sends them to an LLM for evaluation." The judge vetoes ~25% of sessions, and the agent can course-correct ~50% of the time after a veto. The most common trigger: "agent going outside the instructions outlined in the prompt."28
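The veto-and-retry loop can be sketched with the judge and the agent as plain callables. This is an assumed structure, not Spotify's code: `run_with_judge`, the single-retry default, and the toy agent and judge are illustrative, and a real judge would send the prompt and diff to a model.

```python
from typing import Callable, Optional, Tuple

Judge = Callable[[str, str], Optional[str]]  # (prompt, diff) -> veto reason or None
Agent = Callable[[str, Optional[str]], str]  # (prompt, feedback) -> diff


def run_with_judge(
    prompt: str, agent: Agent, judge: Judge, max_retries: int = 1
) -> Tuple[Optional[str], int]:
    """Return (accepted diff or None, number of vetoes)."""
    feedback: Optional[str] = None
    vetoes = 0
    for _ in range(max_retries + 1):
        diff = agent(prompt, feedback)
        feedback = judge(prompt, diff)
        if feedback is None:
            return diff, vetoes
        vetoes += 1  # the veto reason is passed to the agent on the next attempt
    return None, vetoes


def toy_agent(prompt: str, feedback: Optional[str]) -> str:
    # First attempt strays outside the prompt; the retry stays in scope.
    return "config.yaml" if feedback else "config.yaml README.md"


def toy_judge(prompt: str, diff: str) -> Optional[str]:
    # Veto any touched file that the prompt did not mention.
    extra = [name for name in diff.split() if name not in prompt]
    return f"out of scope: {', '.join(extra)}" if extra else None


accepted, veto_count = run_with_judge("Update config.yaml timeout", toy_agent, toy_judge)
```

Logging `veto_count` per session gives you your own equivalent of the veto and recovery rates quoted above.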

Verification types

| Type | What it catches | Who does it |

|---|---|---|

| Format/lint | Style violations, syntax | Automated, pre-commit |

| Build | Compilation errors, type errors | Automated, pre-PR |

| Tests | Functional regressions | Automated, pre-PR or CI |

| LLM judge | Scope creep, prompt violations | Automated, pre-PR |

| Human review | Design, intent, architecture | Human, at PR |

| Visual verification | UI correctness | Agent-driven (Ramp) |

Ramp goes further than most on agent-side verification: "For backend work, it can run tests, review telemetry, and query feature flags. For frontend, it visually verifies its work and gives users screenshots and live previews."29

The human review gate

This is non-negotiable across every organization. One analyst puts it plainly: "The human review gate at the end is not a formality. It is load-bearing... The unglamorous parts of the architecture — the deterministic nodes, the two-round CI cap, the mandatory reviewer — are doing more work than the model is."

Error budget

Not every agent PR will be perfect. Treat a 20-30% "needs revision" rate as normal when starting out. Spotify's LLM judge vetoes ~25% of sessions; the agent can fix ~50% of those on retry.30

If your "needs revision" rate is above 50%, improve context or narrow task scope before scaling. If it's below 10%, you may be giving agents tasks that are too simple to justify the overhead.
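The thresholds above reduce to a small decision rule. The sketch below encodes only the two cut-offs stated in this section (above 50%, below 10%); the function names and the wording of the returned advice are illustrative.

```python
def revision_rate(needs_revision: int, total_prs: int) -> float:
    """Share of agent PRs that needed revision."""
    if total_prs <= 0:
        raise ValueError("total_prs must be positive")
    return needs_revision / total_prs


def error_budget_advice(rate: float) -> str:
    # Cut-offs taken from the error-budget guidance in this section.
    if rate > 0.50:
        return "improve context or narrow task scope before scaling"
    if rate < 0.10:
        return "tasks may be too simple to justify the overhead"
    return "within budget"


advice = error_budget_advice(revision_rate(needs_revision=12, total_prs=20))
```

With 12 of 20 PRs needing revision the rate is 60%, so the advice is to fix context or scope before adding volume.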

Getting started

- Ensure your codebase has lint and build checks that run fast (under 30 seconds).

- Run those checks before the agent opens a PR. Block PR creation on failure.

- If you have tests, run them as part of the agent loop. Agents with test feedback produce better code.

- Add human review for every agent PR. This is not optional.

- Track: What percentage of agent PRs pass review on the first try? That's your quality metric.

5. Prompting and task design

How do you tell the agent what to do?

Prompting principles (cross-company consensus)

| Principle | Source |

|---|---|

| Describe the end state, not the steps. | Spotify: "Claude Code does better with prompts that describe the end state and leave room for figuring out how to get there." |

| State preconditions. Tell the agent when NOT to act. | Spotify: "Agents are eager to act on your prompt, to a fault. It helps to clearly state in the prompt when not to take action." |

| One change per prompt. | Spotify: "Combining several related changes into one elaborate prompt is more likely to get the agent in trouble because it will exhaust its context window or deliver a partial result." |

| Use examples. | Spotify: "Having a handful of concrete code examples heavily influences the outcome." |

| Use constraints, not instructions. | Cursor: "'No TODOs, no partial implementations' works better than 'remember to finish implementations.' Models generally do good things by default. Constraints define their boundaries." |

| Give concrete numbers for scope. | Cursor: "'Generate many tasks' produces a small amount. 'Generate 20-100 tasks' conveys larger scope intent." |

| Use agent feedback to refine prompts. | Spotify: "After a session, the agent itself is in a surprisingly good position to tell you what was missing in the prompt." |

| Treat the agent like a skilled new hire. | Cursor: "Treat the model like a brilliant new hire who knows engineering but not your specific codebase and processes." |

Anti-patterns

- Overly generic: "Make this code better." No verifiable goal.

- Overly specific: Covers every case but breaks on unexpected situations.

- Unscoped freedom: No boundaries leads to agents going deep into "obscure, rarely used features rather than intelligently prioritizing."31

- Missing constraints: "Without explicit instructions and enforced timeouts," agents won't "balance performance alongside other goals."32

6. Human roles and team structure

How are humans and agents organized?

The conductor model

ONA names the emerging role: "From hands-on craftspeople deliberately crafting every line of code, to conductors elegantly orchestrating a symphony of agents operating in parallel."33

Roles observed across organizations

| Role | What they do | Who |

|---|---|---|

| Platform/factory team | Builds and maintains the agent infrastructure, sandbox, API, verification pipeline | Stripe: "Leverage team." Ramp: internal platform. Spotify: Fleet Management team. |

| Prompt author | Writes the prompts that define agent tasks. For migrations, this is a specialized skill. | Spotify: "Writing prompts is hard, and most folks don't have much experience doing it." |

| Agent steersman | Defines constraints, reviews output, feeds corrections back into the system. | OpenAI: "Humans steer. Agents execute." |

| PR reviewer | Reviews agent output for design, intent, architecture. | Stripe: "Though humans review the code, minions write it from start to finish." |

| Context curator | Maintains shared context files, updates rules, promotes discoveries to shared infrastructure. | OpenAI: Doc-gardening agent + human taste enforcement. |

Moving "on the loop"

The consistent framing across all sources: humans move from in the loop (writing code, steering every step) to on the loop (defining intent, setting constraints, verifying outcomes).

- OpenAI: "Humans always remain in the loop, but work at a different layer of abstraction than we used to. We prioritize work, translate user feedback into acceptance criteria, and validate outcomes."

- ONA: "The human role shifts from producing artifacts to specifying intent, defining constraints, and validating outcomes. Less construction. More judgment."34

Non-engineering contributors

Ramp extends agent access beyond engineers: "This also lets builders of all backgrounds contribute with the tooling and setup an engineer would." 30% of merged PRs come from their agent.35

Multiplayer and agent spawning

Ramp treats sessions as multiplayer: "Send your session to any colleague, and they can help take it home." Each person's prompt that causes code changes is attributed to them. Use cases include live QA sessions and having reviewers make changes directly instead of commenting and waiting.

Ramp also gives agents the ability to spawn sub-sessions: "Create tools for starting new sessions and reading status, so it can do research tasks across different repositories or create many smaller PRs for one major task."36

Getting started

You don't need a dedicated platform team to start. You need:

- One person who owns the shared context files and keeps them current.

- One person who reviews agent PRs with the four-check protocol (architecture, convention, intent, drift).

- Everyone learns prompting. It is a skill, and "most folks don't have much experience doing it."

7. Governance and security

How do you keep agents safe?

Runtime governance, not prompt governance

This is the strongest consensus point across all sources. "Governance enforced by a system prompt ('please don't delete files') is a suggestion. Governance enforced at the execution layer — deny lists, scoped credentials, deterministic command blocking — is actual governance. Without it, security teams veto autonomous agents entirely."37

Specific mechanisms

| Mechanism | What it does | Who does it |

|---|---|---|

| Isolated environment | Agent can't reach production or the internet | Stripe, Ramp, Spotify |

| Scoped credentials | Agent gets only the permissions it needs, not developer credentials | ONA: "Autonomous agents need unique identities and authentication" |

| User-attributed PRs | PR opened under the user's GitHub token, not the app's | Ramp: "Do not have the app itself open PRs — that creates a vector for unreviewed code" |

| Deny lists | Specific commands/paths blocked at the execution layer | background-agents.com |

| Audit trails | Everything the agent does is logged and queryable | All organizations |
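To make the deny-list row concrete: the check lives in the code that executes commands, not in the prompt. The sketch below is deliberately simple, and the denied commands and path prefixes are hypothetical examples. A real enforcement layer would also need to handle pipelines, subshells, and absolute binary paths, which this does not.

```python
import shlex
from typing import Optional

DENIED_COMMANDS = {"rm", "curl", "ssh"}          # hypothetical deny list
DENIED_PATH_PREFIXES = ("/etc/", ".git/hooks/")  # hypothetical protected paths


def blocked_reason(command_line: str) -> Optional[str]:
    """Return why a command is blocked, or None if it may run."""
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return "unparseable command"
    if not tokens:
        return "empty command"
    if tokens[0] in DENIED_COMMANDS:
        return f"command '{tokens[0]}' is denied"
    for token in tokens[1:]:
        if token.startswith(DENIED_PATH_PREFIXES):
            return f"path '{token}' is protected"
    return None
```

The executor calls `blocked_reason` before every command and refuses to run anything that returns a reason, regardless of what the model was told in its prompt.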

Security warnings

ONA's CTO on sandbox assumptions: "There's a lot of assumptions about AppArmor and SELinux... they're just labels... There's a lot of different really different technologies under the same moniker that do not provide the same kind of guarantees."38

On API keys: "I do not recommend taking a bunch of API keys, sticking them in an env file... The agent now has access to a raw API key that it might put somewhere you don't want it to show up."

On agents as threat actors: "You're giving an intelligence that will tenaciously follow a particular goal you've given it, possibly to the detriment of what you had in mind... so much smarter than the usual human attacker in finding creative ways to bypass restrictions."

Getting started

- Never give agents production credentials or internet access unless strictly required.

- If masking AI usage (for policy reasons), use `.git/info/exclude` for context files, standard branch naming, and no AI tool names in commit messages.

- Open PRs under the developer's token, not a bot account.

- Log everything the agent does. You will need these logs for debugging, evaluation, and compliance.

8. Invocation and triggers

How does work get to the agent?

Manual invocation surfaces

| Surface | Example | Organization |

|---|---|---|

| Slack | Tag the bot in a thread; it accesses the full thread context | Stripe (primary), Ramp |

| CLI | Command-line for power users | Stripe, Spotify |

| Web UI | Start, monitor, and iterate on sessions | Ramp, Stripe |

| IDE | Cursor, Claude Code, Windsurf — developer initiates from editor | All |

| Chrome extension | Highlight UI elements, use DOM/React tree instead of screenshots | Ramp |

| Internal platforms | Docs platform, feature flag platform, ticketing UI | Stripe: "Making it effortless to kick off an agent from wherever the relevant context already exists" |

Autonomous triggers

| Trigger type | What it does | Example |

|---|---|---|

| Scheduled | Timer-based recurring tasks | Dependency updates, lint sweeps, coverage enforcement. Dead code trimming, metrics threshold monitoring, Sentry error triage. |

| Event-driven | Fires on system events | PR opened → agent reviews. CVE published → agent patches. Alert fired → agent investigates. |

| Backlog automation | Agent picks tasks from the bottom of the backlog | ONA: "Once every hour wakes up, looks at something at the bottom of the backlog, assesses if it can meaningfully fix it."39 |

Getting started

Start with manual invocation from the IDE. This is where most teams already are. Graduate to Slack-based invocation when you want async delegation. Add scheduled triggers only after the one-shot pattern is reliable.

Ramp's repo classifier is a good pattern for Slack bots: "Take the user's incoming message, thread context, and channel name. Give that to a fast model along with descriptions of every repository. Include an 'unknown' option so the AI can ask if unsure."40
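A rough sketch of that classifier pattern follows. The prompt wording, the function names, and the sample repositories are assumptions; `fast_model` stands for whatever small model call you use and is passed in as a plain callable so the routing logic can be tested without a model.

```python
from typing import Callable, Dict


def build_classifier_prompt(message: str, thread: str, channel: str, repos: Dict[str, str]) -> str:
    """Assemble the routing prompt, always offering an 'unknown' choice."""
    options = "\n".join(f"- {name}: {description}" for name, description in repos.items())
    return (
        "Pick the repository this request belongs to.\n"
        f"Channel: {channel}\nThread: {thread}\nMessage: {message}\n"
        f"Repositories:\n{options}\n- unknown: use this if you are not sure\n"
        "Answer with the repository name only."
    )


def classify_repo(
    message: str, thread: str, channel: str, repos: Dict[str, str],
    fast_model: Callable[[str], str],
) -> str:
    answer = fast_model(build_classifier_prompt(message, thread, channel, repos)).strip()
    # Anything outside the known names is treated as 'unknown' so the bot can ask.
    return answer if answer in repos else "unknown"


# Hypothetical repositories and a canned model reply for demonstration.
REPOS = {"billing-service": "invoices and payments", "web-app": "customer dashboard"}
choice = classify_repo(
    "Invoice totals are off", "", "#billing", REPOS, lambda prompt: " billing-service\n"
)
```

Mapping every unexpected reply to `unknown` keeps a confused model from silently routing work to the wrong repository.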

9. Adoption strategy

How do you get people to use it?

The false summit

The single most important insight across all sources: individual developer speed does not equal organizational velocity.

"You rolled out coding agents. Engineers are faster. PRs flood in. Yet, cycle time doesn't budge. DORA metrics are flat. The backlog grows. Because gains are compounding with the individual, not the organization."41

ONA frames this as a manufacturing insight: "When one station speeds up by an order of magnitude and the rest do not, inventory piles up... pull requests waiting for review, unmerged branches, unreleased features." And: "Cheap progress is not the same as value. It is easy to do the first 80% when execution is cheap. The last 20% — verification, integration, release — is where value is created. Until work runs all the way through the system and reaches production, it is inventory, not output."42

Organic over mandated

Ramp's adoption curve was "vertical" because they "didn't force anyone to use Inspect over their own tools. We built to people's needs, created virality loops through letting it work in public spaces, and let the product do the talking."43

Start with pain, not technology

ONA's approach: "If you find the bottleneck and you find the team to whom this is really pain... 'here's how it's causing you pain, here's what we can do about it,' you got yourself a win-win."44

Identify your taste makers

"Who are your taste makers? Who are the ones most likely to not only accept this but embrace this new way of working?"45

Track the right metric

Ramp tracks sessions resulting in merged PRs as the most important metric. They recommend building a statistics page showing organization usage, this metric over time, and a live "humans prompting" count.46

Measuring autonomy: time between disengagements

ONA borrows from self-driving cars: "time between disengagements" measures how long an agent works independently before a human must intervene. Seconds (autocomplete) → minutes (coding agents) → hours (background agents) → days (full autonomy). "Extending this interval translates directly into sweeping productivity gains."47

This is a useful internal metric: how long can your agents work before a human has to step in? Track it and work to extend it.
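One simple way to compute it per session is to average the uninterrupted stretches between the session start, each human intervention, and the session end. That definition is an assumption made for this sketch; ONA does not prescribe a formula.

```python
from datetime import datetime, timedelta
from typing import List


def mean_time_between_disengagements(
    start: datetime, end: datetime, interventions: List[datetime]
) -> timedelta:
    """Average length of the uninterrupted stretches in one session."""
    # Session boundaries plus every intervention that falls inside the session.
    points = [start] + sorted(t for t in interventions if start <= t <= end) + [end]
    gaps = [later - earlier for earlier, later in zip(points, points[1:])]
    return sum(gaps, timedelta()) / len(gaps)


session_start = datetime(2025, 1, 6, 9, 0)
session_end = datetime(2025, 1, 6, 12, 0)
human_steps = [datetime(2025, 1, 6, 10, 0), datetime(2025, 1, 6, 11, 30)]
average_gap = mean_time_between_disengagements(session_start, session_end, human_steps)
```

In this example the stretches are 60, 90, and 30 minutes, so the average is one hour. A session with no interventions scores its full duration.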

10. Entropy and maintenance

How do you keep a factory running?

The garbage collection problem

OpenAI discovered this firsthand: "Full agent autonomy also introduces novel problems. Codex replicates patterns that already exist in the repository — even uneven or suboptimal ones. Over time, this inevitably leads to drift." Initially their team spent every Friday (20% of the week) cleaning up "AI slop."48

The solution: mechanical enforcement + recurring cleanup

- Golden principles: Opinionated, mechanical rules encoded in the repo. Linters and CI enforce them automatically.

- Doc-gardening agent: Scans for stale or obsolete documentation that doesn't reflect real code behavior. Opens fix-up PRs.

- Quality grades: Each product domain and architectural layer gets a quality score, tracked over time. Background Codex tasks scan for deviations and open targeted refactoring PRs.

- Architectural invariants: "Enforce invariants, not implementations... require Codex to parse data shapes at the boundary, but are not prescriptive on how that happens."

- Custom lints with agent-readable error messages: "We statically enforce structured logging, naming conventions, file size limits. Because the lints are custom, we write the error messages to inject remediation instructions into agent context."49
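The last item is easy to try on a small scale. Below is a sketch of a file-size lint whose error message tells the agent what to do next; the 400-line limit, the function name, and the remediation wording are hypothetical, not OpenAI's actual rules.

```python
from typing import Optional

MAX_LINES = 400  # hypothetical limit; pick one that fits your codebase


def check_file_size(path: str, source: str, max_lines: int = MAX_LINES) -> Optional[str]:
    """Return an agent-readable error when a file is too long, else None."""
    line_count = len(source.splitlines())
    if line_count <= max_lines:
        return None
    # The message carries the fix, so it lands in the agent's context on failure.
    return (
        f"{path}: {line_count} lines exceeds the {max_lines}-line limit. "
        "Remediation: split the file by responsibility into smaller modules "
        "and re-run the lint; do not raise the limit."
    )


message = check_file_size("big.py", "x = 1\n" * 6, max_lines=5)
```

Because the agent reads lint output as part of its loop, the remediation text works like a just-in-time instruction that only appears when it is relevant.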

Knowledge return loop

Spotify closes the loop by asking the agent what the prompt lacked: "After a session, the agent itself is in a surprisingly good position to tell you what was missing in the prompt. Use that feedback to refine future prompts."50

11. Failure modes and mitigations

What goes wrong and how do you handle it?

| Failure mode | Severity | Mitigation | Source |

|---|---|---|---|

| Agent fails to produce a PR | Low | Accept small failure rate; manual fallback | Spotify |

| PR produced but fails CI | Medium | Verification loop before PR; verifiers abstract build complexity | Spotify |

| PR passes CI but is functionally wrong | High | LLM-as-judge evaluates diff vs. prompt; vetoes ~25% of sessions | Spotify |

| Token paralysis (too many tools) | Medium | Curate ~15 tools from 400+; select most relevant per run | Stripe |

| Context window exhaustion | Medium | One change per prompt; static prompts; limited tools | Spotify |

| Agent goes outside scope | High | Preconditions in prompt; judge; narrow agent scope | Spotify, Cursor |

| Unbounded CI retries | Medium | Cap at 2 rounds; fix locally first | Stripe |

| Security: raw API keys in env | High | Use scoped credentials, IAM roles, not env vars | ONA |

| Drift and entropy over time | Cumulative | Golden principles, doc-gardening, quality grades, recurring cleanup | OpenAI |

| False summit (individual speed, org stagnation) | Strategic | Fix the system, not just agents; address review, CI, release bottlenecks | ONA, background-agents.com |

Rollback strategy

If an agent PR is merged and causes issues: revert via standard git revert. For agent-induced drift: use the doc-gardening pattern (Section 10) to detect and fix. Never give agents direct access to production deployment. The PR → review → merge → deploy pipeline is the safety net.

12. Key technologies and tools

What the organizations actually use.

| Category | Technologies |

|---|---|

| Agent frameworks | Claude Code / Claude Agent SDK (Spotify), Codex / Codex CLI with GPT-5 (OpenAI), Goose fork (Stripe), OpenCode (Ramp), Cursor agent harness |

| Sandbox/compute | AWS EC2 devboxes (Stripe), Modal VMs + snapshots (Ramp), containerized execution (Spotify), large Linux VM (Cursor) |

| API/session management | Cloudflare Durable Objects + Agents SDK (Ramp) |

| Tool protocol | MCP (Model Context Protocol) — used by Stripe (Toolshed, 400+ tools), Spotify (verify, git, bash), Ramp (custom tools + MCPs) |

| Observability | Datadog, Sentry (Ramp), GCP + MLflow (Spotify), local observability stack with LogQL/PromQL (OpenAI) |

| Source code search | Sourcegraph (Stripe) |

| CI/CD | Buildkite (Ramp), GitHub Actions, internal CI (Stripe, Spotify) |

| Clients | Slack (Stripe, Ramp), web UI (Ramp, Stripe), Chrome extension (Ramp), VS Code / Cursor |

| Context files | AGENTS.md, CLAUDE.md, copilot-instructions.md, .cursor/rules/ |

13. Metrics from the field

What results are these organizations actually seeing?

| Organization | Metric | Value |

|---|---|---|

| Spotify | Merged AI-generated PRs | 1,500+ |

| Spotify | Time savings on migrations vs. manual | 60-90% |

| Spotify | Migrations using Claude Code | ~50 |

| Spotify | PRs from Fleetshift automation | ~50% of all merged PRs since mid-2024 |

| Stripe | PRs merged per week | 1,000+ → 1,300 |

| Stripe | Devbox spin-up time | 10 seconds |

| Stripe | Local lint execution | Under 5 seconds |

| Ramp | PRs from agent (Inspect) | ~30% of all merged PRs |

| Ramp | Time to reach that adoption level | A couple months |

| OpenAI | Codebase size (zero manual code) | ~1 million lines |

| OpenAI | PRs per engineer per day | 3.5 average |

| OpenAI | Team size | Started with 3, grew to 7 engineers |

| OpenAI | Build time estimate vs. manual | ~1/10th the time |

| Cursor | Peak commit throughput | ~1,000 commits/hour |

| Cursor | Long-running agent durations | 25-52 hours |

14. Cost and budget

Rough cost ranges for different configurations. These vary significantly by model, task complexity, and provider pricing.

| Configuration | Estimated cost | Notes |

|---|---|---|

| Local agents (Cursor, Claude Code) | ~$20-100/user/month | Subscription + API usage. Depends on task volume. |

| Cloud-hosted VMs (Modal, EC2) | ~$100-500/month | Depends on session count, duration, and VM size. Ramp: "effectively free to run" per session on Modal. |

| API costs per agent task | ~$0.01-1.00 per task | Depends on context size, model, and number of tool calls. One-shot tasks are cheapest. |

| LLM-as-judge (per PR) | ~$0.01-0.10 | Small context (diff + prompt). Fast model sufficient. |

Start local. The cheapest path is local agents on developer machines with worktree isolation. Measure before investing in cloud infrastructure. The ROI case writes itself once you can show agent PRs merging successfully — Ramp's 30% PR share and Spotify's 60-90% time savings on migrations are the benchmarks.
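For a first budget estimate, the per-task and per-PR ranges in the table can be multiplied out. The sketch below assumes every task produces exactly one judged PR and ignores subscription and VM costs; the function name is illustrative.

```python
from typing import Tuple

TASK_COST = (0.01, 1.00)   # USD per agent task, range from the table above
JUDGE_COST = (0.01, 0.10)  # USD per judged PR, range from the table above


def monthly_api_cost(tasks_per_month: int, judged: bool = True) -> Tuple[float, float]:
    """Return the (low, high) monthly API spend in USD."""
    judge_low, judge_high = JUDGE_COST if judged else (0.0, 0.0)
    low = tasks_per_month * (TASK_COST[0] + judge_low)
    high = tasks_per_month * (TASK_COST[1] + judge_high)
    return round(low, 2), round(high, 2)


low_estimate, high_estimate = monthly_api_cost(500)
```

At 500 tasks a month this gives roughly $10 to $550, which shows how wide the range is until you measure your own context sizes and tool-call counts.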

15. Maturity model

Where to start, where to go.

Phase 0: Prerequisites (day 1)

Before running any agent, verify these basics:

- Codebase has lint that runs in under 30 seconds

- Codebase has a build command that succeeds

- Codebase has at least some test coverage

- You have an AI coding tool installed (Claude Code, Cursor, Windsurf, etc.)

- You can create a git worktree: `git worktree add ../test-worktree -b test/agent-trial`

- You can describe one well-scoped task (test generation, config change, dependency update)

If any of these fail, fix them first. A fast, reliable lint/build/test cycle is the single most important prerequisite. Without it, agents produce code that can't be verified.
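The lint, build, and test items in the checklist can be verified mechanically. The sketch below is one way to do it, assuming your project exposes each step as a single command. The `make lint`, `make build`, and `make test` commands are hypothetical placeholders, and the 30-second budget is the lint threshold from the checklist.

```python
import subprocess
import time
from typing import Dict, List, Tuple

LINT_BUDGET_SECONDS = 30.0  # lint threshold from the Phase 0 checklist


def run_check(command: List[str]) -> Tuple[bool, float]:
    """Run one command and return (succeeded, elapsed_seconds)."""
    start = time.monotonic()
    try:
        completed = subprocess.run(command, capture_output=True)
        succeeded = completed.returncode == 0
    except OSError:
        # The command could not be started (for example, it is not installed).
        succeeded = False
    return succeeded, time.monotonic() - start


def check_prerequisites(commands: Dict[str, List[str]]) -> Dict[str, bool]:
    """Run every named command; lint must also finish within the time budget."""
    results = {}
    for name, command in commands.items():
        ok, elapsed = run_check(command)
        if name == "lint":
            ok = ok and elapsed < LINT_BUDGET_SECONDS
        results[name] = ok
    return results


if __name__ == "__main__":
    # Hypothetical commands: replace with your project's real lint/build/test.
    project_commands = {
        "lint": ["make", "lint"],
        "build": ["make", "build"],
        "test": ["make", "test"],
    }
    for name, ok in check_prerequisites(project_commands).items():
        print(f"{name}: {'ok' if ok else 'FIX FIRST'}")
```

Unlike a CI gate, this runs every check even after one fails, so a single pass shows everything that needs fixing before the first agent run.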

Phase 1: Local agents, manual invocation (weeks 1-2)

What you need: A codebase with lint/build/test. An AI coding tool (Claude Code, Cursor, etc.). One person writing context files.

What you do:

- Write AGENTS.md / CLAUDE.md (under 200 lines)

- Use worktrees for isolation: one worktree per task

- Pick a well-scoped task. Run the agent. Review the PR.

- Document what the agent got wrong → update context files

- Track: did the context improve agent output?

Exit criteria: Agent PRs pass review on first try >50% of the time. Context files reflect actual codebase conventions.
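The first exit criterion is easy to track from a simple log of agent PRs. The following is a minimal sketch. The `AgentPR` record and its fields are hypothetical, and "first try" is interpreted here as "merged after exactly one review round", which is an assumption you may want to adjust to your own review process.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class AgentPR:
    """One agent-authored PR. Fields are hypothetical; map them to your tracker."""
    identifier: str
    review_rounds: int  # review rounds before the PR was merged or abandoned
    merged: bool


def first_try_pass_rate(prs: Iterable[AgentPR]) -> float:
    """Share of agent PRs that were merged after exactly one review round."""
    pr_list = list(prs)
    if not pr_list:
        return 0.0
    passed = sum(1 for pr in pr_list if pr.merged and pr.review_rounds == 1)
    return passed / len(pr_list)


def meets_phase1_exit(prs: Iterable[AgentPR], threshold: float = 0.5) -> bool:
    """True when the first-try pass rate is strictly above the threshold."""
    return first_try_pass_rate(prs) > threshold


if __name__ == "__main__":
    log = [
        AgentPR("pr-a", review_rounds=1, merged=True),
        AgentPR("pr-b", review_rounds=3, merged=True),
        AgentPR("pr-c", review_rounds=1, merged=True),
        AgentPR("pr-d", review_rounds=2, merged=False),
    ]
    print(f"first-try pass rate: {first_try_pass_rate(log):.0%}")
    print(f"phase 1 exit met: {meets_phase1_exit(log)}")
```

Recomputing the rate after each context-file update gives a direct answer to the tracking question in the list: whether the added context improved agent output.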

Phase 2: Shared infrastructure, team adoption (weeks 3-4)

What you need: Phase 1 validated. Team willing to try.

What you do:

- Distribute context overlay to the team (gitignored or committed, depending on repo access)

- Document the workflow: how to create a worktree, run an agent, submit a PR

- Cross-tool consistency test: multiple people run comparable tasks, compare output

- Start the "what I learned" ritual: share one discovery per week

- Define ownership zones so agents don't cross boundaries

Exit criteria: Multiple people use agents on real work. PR review protocol handles agent output. Context files maintained by >1 person.

Phase 3: Background agents, async delegation (months 2-3)

What you need: Phase 2 validated. Cloud infrastructure or long-running local capability.

What you do:

- Move to cloud-hosted execution (Modal, EC2, dev containers)

- Add Slack or web invocation: delegate a task, walk away, review later

- Add verification loop: lint → build → test → block PR on failure

- Track: sessions resulting in merged PRs (the Ramp metric)

- First scheduled agents: dependency updates, lint sweeps

Exit criteria: Agents run without developer machines. >20% of PRs come from agents. Review pipeline handles the volume.
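The verification loop in this phase (lint → build → test → block PR on failure) can be sketched as a fail-fast runner. This is a minimal illustration, not any of the cited companies' implementations. The step commands are supplied by the caller, and the `VerificationResult` type is a hypothetical name introduced here.

```python
import subprocess
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class VerificationResult:
    passed: bool
    failed_step: Optional[str]  # name of the first failing step, if any
    output: str                 # output of the failing step, to feed back to the agent


def verify(
    steps: Sequence[Tuple[str, List[str]]],
    cwd: Optional[str] = None,
) -> VerificationResult:
    """Run the steps in order and stop at the first failure."""
    for name, command in steps:
        try:
            completed = subprocess.run(
                command, cwd=cwd, capture_output=True, text=True
            )
        except OSError as error:
            # The command could not be started at all; treat it as a failure.
            return VerificationResult(False, name, str(error))
        if completed.returncode != 0:
            return VerificationResult(
                False, name, completed.stdout + completed.stderr
            )
    return VerificationResult(True, None, "")
```

A caller would pass something like `[("lint", [...]), ("build", [...]), ("test", [...])]` with the project's own commands, open the PR only when `passed` is true, and otherwise hand `output` back to the agent for another attempt. Stopping at the first failure keeps the feedback short and avoids running slow tests on code that does not lint.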

Phase 4: Factory at scale (months 3-6)

What you need: Phase 3 validated. Dedicated platform attention.

What you do:

- Add LLM-as-judge for scope compliance

- Fleet runs across multiple repositories

- Governance at runtime: scoped credentials, audit trails, deny lists

- Agent spawns sub-agents for research or parallel PRs

- Recurring cleanup agents: doc-gardening, quality grades, dead code trimming

- Statistics dashboard: merged PRs, quality metrics, adoption trends

Exit criteria: Organization produces meaningful output through agents. System bottlenecks shift from code production to review, testing, and release. Engineers are "on the loop."
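Of the governance items in this phase, a deny list is the simplest to make deterministic. The sketch below checks the paths an agent changed against a list of glob patterns before a PR is allowed through. The patterns are hypothetical examples, and the matching uses Python's `fnmatchcase`, where `*` also matches path separators.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Sequence

# Hypothetical deny list. With fnmatch-style globs, "*" also matches "/".
DENY_PATTERNS: Sequence[str] = (
    ".github/workflows/*",
    "infra/*",
    "*.pem",
    "*/secrets/*",
)


def denied_paths(
    changed_paths: Iterable[str],
    deny_patterns: Sequence[str] = DENY_PATTERNS,
) -> List[str]:
    """Return the changed paths that match at least one deny pattern."""
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, pattern) for pattern in deny_patterns)
    ]


if __name__ == "__main__":
    changed = [
        "src/app.py",
        "infra/prod/main.tf",
        "keys/deploy.pem",
        "services/api/secrets/db.env",
    ]
    blocked = denied_paths(changed)
    if blocked:
        print("Blocking PR; agent touched denied paths:")
        for path in blocked:
            print(f"  {path}")
```

A check like this is a deterministic guardrail: it gives the same answer regardless of which model produced the change, and it can run before any human or LLM-as-judge review.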

Key principles (cross-company)

- The walls matter more than the model. Deterministic guardrails do more for reliability than model selection.

- Start narrow. One agent, one task, one PR. Expand after it works.

- Verification before PR, not after. Shift feedback left. Don't waste human review cycles on code that fails lint.

- Human review is load-bearing, not ceremonial. Every organization keeps this gate.

- Context as map, not manual. Small entry point, progressive disclosure, structured knowledge base.

- Fix the system, not just the agents. Individual speed without system flow creates inventory, not output.

- Own your tooling. "Owning the tooling lets you build something significantly more powerful than an off-the-shelf tool will ever be. After all, it only has to work on your code."51

- Adopt organically. Build to people's pain, not to technology hype. Let the product do the talking.

- Accept some error rate. "The ideal efficient system accepts some error rate, but a final 'green' branch is needed where an agent regularly takes snapshots and does a quick fixup pass before release."52

- The discipline shifts, it doesn't disappear. "Building software still demands discipline, but the discipline shows up more in the scaffolding rather than the code."53

Sources: 13 articles and transcripts from Stripe, Spotify, Ramp, OpenAI, Cursor, and background-agents.com/ONA, collected and analyzed March 2026. Every claim in this document cites its source.

Footnotes

1. Alistair Gray, "Minions: Stripe's one-shot, end-to-end coding agents — Part 2," Stripe Engineering Blog, 2026. https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents-part-2
2. Spotify Engineering, "Spotify's journey with our background coding agent," engineering.atspotify.com, 2025. https://engineering.atspotify.com/2025/11/spotifys-journey-with-our-background-coding-agent
3. Ramp Engineering, "Why We Built Our Background Agent," Ramp Builders Blog, 2025. https://builders.ramp.com/post/why-we-built-our-background-agent
4. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
5. Alistair Gray, "Minions: Stripe's one-shot, end-to-end coding agents," Stripe Engineering Blog, February 2026. https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents
6. Ramp Engineering, "Why We Built Our Background Agent," Ramp Builders Blog. https://builders.ramp.com/post/why-we-built-our-background-agent
7. Spotify Engineering, "Feedback loops for background coding agents (part 3)," engineering.atspotify.com, December 2025. https://engineering.atspotify.com/2025/12/feedback-loops-background-coding-agents-part-3
8. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
9. Cursor, "Towards self-driving codebases," Cursor Blog, 2025. https://cursor.com/blog/self-driving-codebases
10. Christian Weichel (ONA CTO), talk transcript, "Self-driving codebase primitives," background-agents.com video series, January 2026. https://background-agents.com/
11. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
12. Ibid.
13. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
14. Alistair Gray, "Minions: Stripe's one-shot, end-to-end coding agents," Stripe Engineering Blog. https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents
15. Spotify Engineering, "Context engineering for background coding agents (part 2)," engineering.atspotify.com, November 2025. https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2
16. Spotify Engineering, "Context engineering for background coding agents (part 2)." https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2
17. Alistair Gray, "Minions: Stripe's one-shot, end-to-end coding agents — Part 2," Stripe Engineering Blog. https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents-part-2
18. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
19. Spotify Engineering, "Context engineering for background coding agents (part 2)." https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2
20. Spotify Engineering, "Feedback loops for background coding agents (part 3)." https://engineering.atspotify.com/2025/12/feedback-loops-background-coding-agents-part-3
21. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
22. Cursor, "Scaling agents," Cursor Blog, 2025. https://cursor.com/blog/scaling-agents
23. Cursor, "Towards self-driving codebases," Cursor Blog. https://cursor.com/blog/self-driving-codebases
24. Spotify Engineering, "Spotify's journey with our background coding agent." https://engineering.atspotify.com/2025/11/spotifys-journey-with-our-background-coding-agent
25. background-agents.com, "The Self-Driving Codebase: Background Agents and the Next Era of Software Delivery." https://background-agents.com/
26. Alistair Gray, "Minions: Stripe's one-shot, end-to-end coding agents." https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents
27. Spotify Engineering, "Feedback loops for background coding agents (part 3)." https://engineering.atspotify.com/2025/12/feedback-loops-background-coding-agents-part-3
28. Spotify Engineering, "Feedback loops for background coding agents (part 3)." https://engineering.atspotify.com/2025/12/feedback-loops-background-coding-agents-part-3
29. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
30. Spotify Engineering, "Feedback loops for background coding agents (part 3)." https://engineering.atspotify.com/2025/12/feedback-loops-background-coding-agents-part-3
31. Cursor, "Towards self-driving codebases." https://cursor.com/blog/self-driving-codebases
32. Ibid.
33. Christian Weichel, "The Software Conductor," ONA Blog, January 2026. https://ona.com/
34. Christian Weichel, "Industrializing Software Development," ONA Blog, January 2026. https://ona.com/
35. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
36. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
37. background-agents.com, "The Self-Driving Codebase." https://background-agents.com/
38. Christian Weichel, "Self-driving codebase primitives," background-agents.com video series, 2026. https://background-agents.com/
39. Christian Weichel, background-agents.com video series, 2026. https://background-agents.com/
40. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
41. background-agents.com, "The Self-Driving Codebase." https://background-agents.com/
42. Christian Weichel, "Industrializing Software Development," ONA Blog, 2026. https://ona.com/
43. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
44. Christian Weichel, background-agents.com video series, 2026. https://background-agents.com/
45. Ibid.
46. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
47. Christian Weichel, "The Software Conductor," ONA Blog, 2026. https://ona.com/
48. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
49. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.
50. Spotify Engineering, "Context engineering for background coding agents (part 2)." https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2
51. Ramp Engineering, "Why We Built Our Background Agent." https://builders.ramp.com/post/why-we-built-our-background-agent
52. Cursor, "Towards self-driving codebases." https://cursor.com/blog/self-driving-codebases
53. OpenAI Engineering, "Engineering with Codex," OpenAI Blog, 2026.